Object detection

Description of the task

The goal of this workflow is to localize objects in the input image, not requiring a pixel-level class. Common strategies produce either bounding boxes containing the objects or individual points at their center of mass [13], which is the one adopted by BiaPy.

An example of this task is displayed in the figure below, with a fluorescence microscopy image used as input (left) and its corresponding nuclei detection results (rigth). Each red dot in the right image corresponds to a unique predicted object.

Input image (fluorescence microscopy, |

Detected nuclei represented with red dots |

Inputs and outputs

The detection workflows in BiaPy expect a series of folders as input:

Training Raw Images: A folder that contains the unprocessed (single-channel or multi-channel) images that will be used to train the model.

Training CSV files: A folder that contains the CSV files for training, providing the coordinates of the center of each object of interest. The number of these files should align with the number of training raw images.

- [Optional] Test Raw Images: A folder that contains the images to evaluate the model's performance.

- [Optional] Test CSV files: A folder that contains the CSV files with the center of the objects for testing. Again, ensure their count aligns with that of the test raw images.

Upon successful execution, a directory will be generated with the detection results. Therefore, you will need to define:

Output Folder: A designated path to save the detection outcomes.

BiaPy input and output folders for detection. The label folders in this case |

Note

All comma-separated values (CSV) files used in the detection workflow follow the napari point format, so those annotations files can be easily exported from and imported to napari. Here you have an example of the beginning of such file with the 2D coordinates of the objects of interest of its corresponding input image:

Data structure

To ensure the proper operation of the library, the data directory tree should be something like this:

dataset/

├── train

│ ├── raw

│ │ ├── training-0001.tif

│ │ ├── training-0002.tif

│ │ ├── . . .

│ │ └── training-9999.tif

│ └── label

│ ├── training-0001.csv

│ ├── training-0002.csv

│ ├── . . .

│ └── training-9999.csv

└── test

├── raw

│ ├── testing-0001.tif

│ ├── testing-0002.tif

│ ├── . . .

│ └── testing-9999.tif

└── label

├── testing-0001.csv

├── testing-0002.csv

├── . . .

└── testing-9999.csv

In this example, the raw training images are under dataset/train/raw/ and their corresponding CSV files are under dataset/train/label/, while the raw test images are under dataset/test/raw/ and their corresponding CSV files are under dataset/test/label/. This is just an example, you can name your folders as you wish as long as you set the paths correctly later.

Note

In this workflow the name of each input file (with extension .tif in the example above) and its corresponding CSV file must be the same.

Example datasets

Below is a list of publicly available datasets that are ready to be used in BiaPy for object detection:

Example dataset |

Image dimensions |

Link to data |

|---|---|---|

2D |

||

3D |

Minimal configuration

Apart from the input and output folders, there are a few basic parameters that always need to be specified in order to run an detection workflow in BiaPy. Depending on the parameter, they can be defined through the GUI Wizard, in the code-free notebooks, or by editing the YAML configuration file.

Experiment name

Also known as “model name” or “job name”, this will be the name of the current experiment you want to run, so it can be differenciated from other past and future experiments.

Note

Use only my_model -style, not my-model (Use “_” not “-“). Do not use spaces in the name. Avoid using the name of an existing experiment/model/job (saved in the same result folder) as it will be overwritten.

Data management

Validation Set

With the goal to monitor the training process, it is common to use a third dataset called the “Validation Set”. This is a subset of the whole dataset that is used to evaluate the model’s performance and optimize training parameters. This subset will not be directly used for training the model, and thus, when applying the model to these images, we can see if the model is learning the training set’s patterns too specifically or if it is generalizing properly.

Graphical description of data partitions in BiaPy. |

To define such set, there are two options:

Validation proportion/percentage: Select a proportion (or percentage) of your training dataset to be used to validate the network during the training. Usual values are 0.1 (10%) or 0.2 (20%), and the samples of that set will be selected at random.

Validation paths: Similar to the training and test sets, you can select two folders with the validation raw and label images:

Validation Raw Images: A folder that contains the unprocessed (single-channel or multi-channel) images that will be used to select the best model during training.

Validation CSV files: A folder that contains the CSV files for validation.

Test ground-truth

Do you have annotations (CSV files with the object coordinates) for the test set? This is a key question so BiaPy knows if your test set will be used for evaluation in new data (unseen during training) or simply produce predictions on that new data. All workflows contain a parameter to specify this aspect.

Basic training parameters

At the core of each BiaPy workflow there is a deep learning model. Although we try to simplify the number of parameters to tune, these are the basic parameters that need to be defined for training an object detection workflow:

Number of input channels: The number of channels of your raw images (grayscale = 1, RGB = 3). Notice the dimensionality of your images (2D/3D) is set by default depending on the workflow template you select.

Number of epochs: This number indicates how many rounds the network will be trained. On each round, the network usually sees the full training set. The value of this parameter depends on the size and complexity of each dataset. You can start with something like 100 epochs and tune it depending on how fast the loss (error) is reduced.

Patience: This is a number that indicates how many epochs you want to wait without the model improving its results in the validation set to stop training. Again, this value depends on the data you’re working on, but you can start with something like 20.

For improving performance, other advanced parameters can be optimized, for example, the model’s architecture. The architecture assigned as default is the Residual U-Net, as it is effective in object detection tasks. This architecture allows a strong baseline, but further exploration could potentially lead to better results.

Note

Once the parameters are correctly assigned, the training phase can be executed. Note that to train large models effectively the use of a GPU (Graphics Processing Unit) is essential. This hardware accelerator performs parallel computations and has larger RAM memory compared to the CPUs, which enables faster training times.

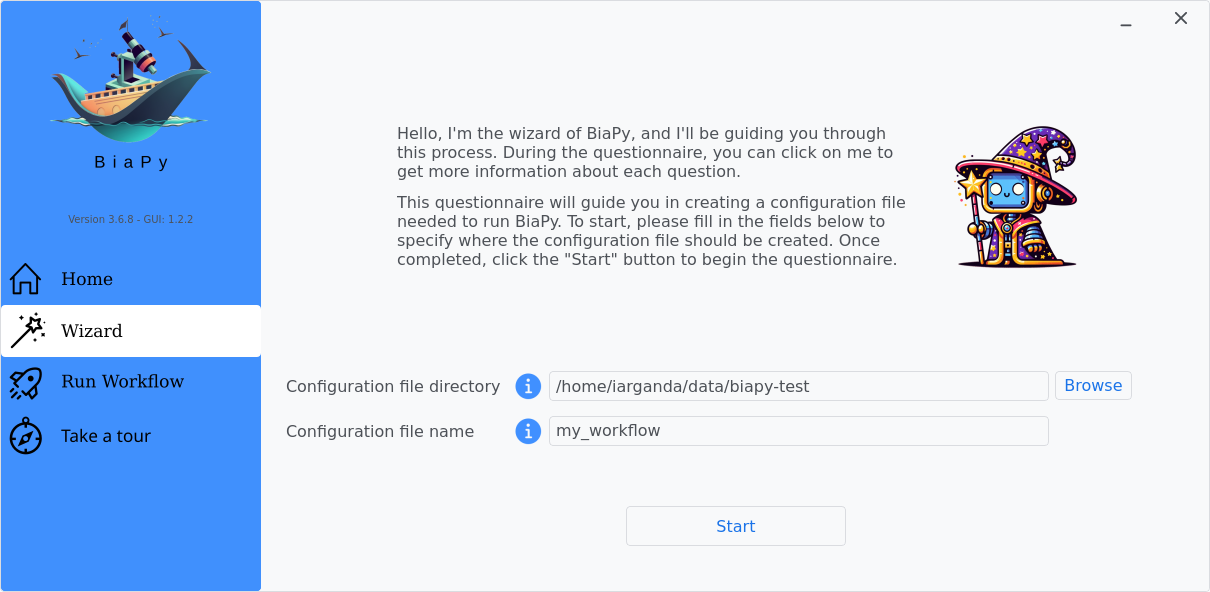

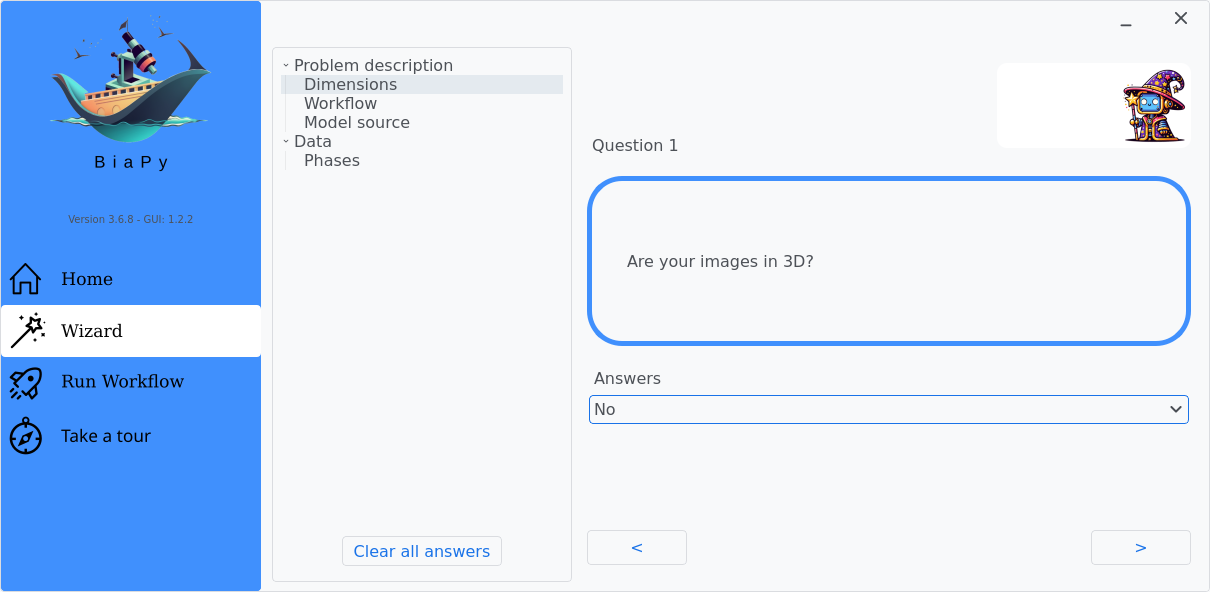

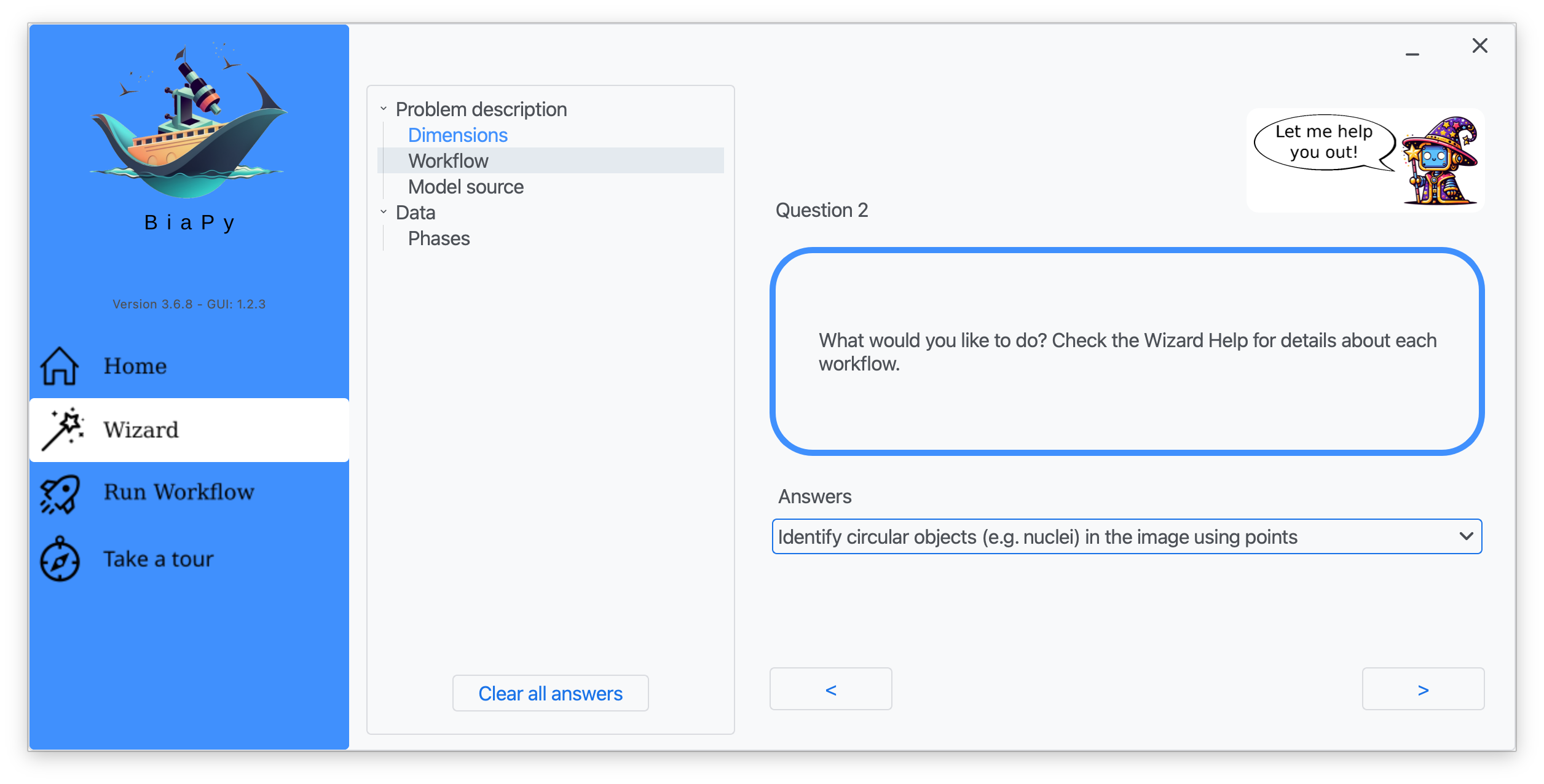

How to run

BiaPy offers different options to run workflows depending on your degree of computer expertise. Select whichever is more approppriate for you:

In the BiaPy GUI, click on the Wizard, then follow the next instructions to select the object detection workflow:

After that, you will be able to edit the parameters of the workflow and run it.

Note

BiaPy’s GUI requires that all data and configuration files reside on the same machine where the GUI is being executed.

Tip

If you need additional help, watch BiaPy’s GUI walkthrough video.

BiaPy offers two code-free notebooks in Google Colab to perform object detection:

Tip

If you need additional help, watch BiaPy’s Notebook walkthrough video.

If you installed BiaPy via Docker, open a terminal as described in Installation. Then, you can use for instance the 2d_detection.yaml template file (or your own YAML configuration file), and run the workflow as follows:

# Configuration file

job_cfg_file=/home/user/2d_detection.yaml

# Path to the data directory

data_dir=/home/user/data

# Where the experiment output directory should be created

result_dir=/home/user/exp_results

# Just a name for the job

job_name=my_2d_detection

# Number that should be increased when one need to run the same job multiple times (reproducibility)

job_counter=1

# Number of the GPU to run the job in (according to 'nvidia-smi' command)

gpu_number=0

docker run --rm \

--gpus "device=$gpu_number" \

--mount type=bind,source=$job_cfg_file,target=$job_cfg_file \

--mount type=bind,source=$result_dir,target=$result_dir \

--mount type=bind,source=$data_dir,target=$data_dir \

biapyx/biapy:latest-11.8 \

biapy \

--config $job_cfg_file \

--result_dir $result_dir \

--name $job_name \

--run_id $job_counter \

--gpu "$gpu_number"

Note

Note that data_dir must contain all the paths DATA.*.PATH and DATA.*.GT_PATH so the container can find them. For instance, if you want to only train in this example DATA.TRAIN.PATH and DATA.TRAIN.GT_PATH could be /home/user/data/train/x and /home/user/data/train/y respectively.

For container versions prior to 3.6.8, the biapy prefix is not required. You can execute the command directly as follows:

docker run --rm \

--gpus "device=$gpu_number" \

--mount type=bind,source=$job_cfg_file,target=$job_cfg_file \

--mount type=bind,source=$result_dir,target=$result_dir \

--mount type=bind,source=$data_dir,target=$data_dir \

biapyx/biapy:3.6.7-11.8 \

--config $job_cfg_file \

--result_dir $result_dir \

--name $job_name \

--run_id $job_counter \

--gpu "$gpu_number"

From a terminal, you can use for instance the 2d_detection.yaml template file (or your own YAML configuration file), and run the workflow as follows:

# Configuration file

job_cfg_file=/home/user/2d_detection.yaml

# Where the experiment output directory should be created

result_dir=/home/user/exp_results

# Just a name for the job

job_name=my_2d_detection

# Number that should be increased when one need to run the same job multiple times (reproducibility)

job_counter=1

# Number of the GPU to run the job in (according to 'nvidia-smi' command)

gpu_number=0

# Load the environment

conda activate BiaPy_env

biapy \

--config $job_cfg_file \

--result_dir $result_dir \

--name $job_name \

--run_id $job_counter \

--gpu "$gpu_number"

For multi-GPU training you can call BiaPy as follows:

# First check where is your biapy command (you need it in the below command)

# $ which biapy

# > /home/user/anaconda3/envs/BiaPy_env/bin/biapy

gpu_number="0, 1, 2"

python -u -m torch.distributed.run \

--nproc_per_node=3 \

/home/user/anaconda3/envs/BiaPy_env/bin/biapy \

--config $job_cfg_file \

--result_dir $result_dir \

--name $job_name \

--run_id $job_counter \

--gpu "$gpu_number"

nproc_per_node needs to be equal to the number of GPUs you are using (e.g. gpu_number length).

Templates

In the templates/detection folder of BiaPy, you will find a few YAML configuration templates for this workflow.

[Advanced] Special workflow configuration

Note

This section is recommended for experienced users only to improve the performance of their workflows. When in doubt, do not hesitate to check our FAQ & Troubleshooting or open a question in the image.sc discussion forum.

Advanced Parameters

Many of the parameters of our workflows are set by default to values that work commonly well. However, it may be needed to tune them to improve the results of the workflow. For instance, you may modify the following parameters

Model architecture: Select the architecture of the deep neural network used as backbone of the pipeline. Options: U-Net, Residual U-Net, Attention U-Net, SEUNet, MultiResUNet, ResUNet++, UNETR-Mini, UNETR-Small, UNETR-Base, ResUNet SE and U-NeXt V1. Safe choice: Residual U-Net.

Batch size: This parameter defines the number of patches seen in each training step. Reducing or increasing the batch size may slow or speed up your training, respectively, and can influence network performance. Common values are 4, 8, 16, etc.

Patch size: Input the size of the patches use to train your model (length in pixels in X and Y). The value should be smaller or equal to the dimensions of the image. The default value is 256 in 2D, i.e. 256x256 pixels.

Optimizer: Select the optimizer used to train your model. Options: ADAM, ADAMW, Stochastic Gradient Descent (SGD). ADAM usually converges faster, while ADAMW provides a balance between fast convergence and better handling of weight decay regularization. SGD is known for better generalization. Default value: ADAMW.

Initial learning rate: Input the initial value to be used as learning rate. If you select ADAM as optimizer, this value should be around 10e-4.

Learning rate scheduler: Select to adjust the learning rate between epochs. The current options are “Reduce on plateau”, “One cycle”, “Warm-up cosine decay” or no scheduler.

Test time augmentation (TTA): Select to apply augmentation (flips and rotations) at test time. It usually provides more robust results but uses more time to produce each result. By default, no TTA is peformed.

Problem resolution

In the detection workflows, a pre-processing step is performed where the list of points of the .csv file is transformed into point mask images. During this process some checks are made to ensure there is not repeated point in the .csv. This option is True by default with PROBLEM.DETECTION.CHECK_POINTS_CREATED so if any problem is found the point mask of that .csv will not be created until the problem is solve.

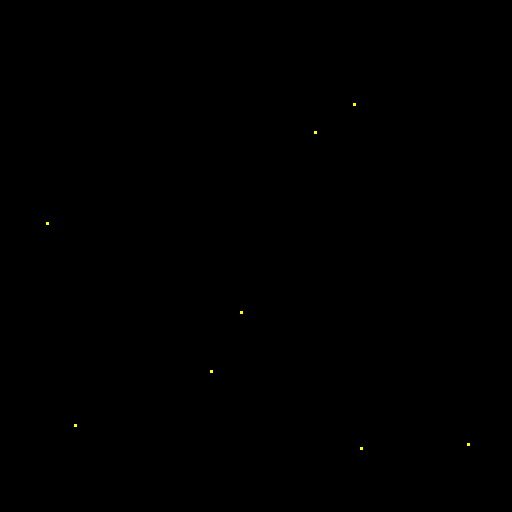

After the train phase, the model output will be an image where each pixel of each channel will have the probability (in [0-1] range) of being of the class that represents that channel. The image would be something similar to the left picture below:

Model output. |

Final points considered. |

So those probability images, as the left picture above, can be converted into the final points, as the rigth picture above. To do so you can use two possible functions (defined by TEST.DET_POINT_CREATION_FUNCTION):

The most important aspect of these options is using the threshold defined by the TEST.DET_MIN_TH_TO_BE_PEAK variable, which sets the minimum probability for a point to be considered.

CSV specifications

The CSV files used in the detection workflows are as follows:

Each row represents the middle point of the object to be detected. Each column is a coordinate in the image dimension space.

The first column name does not matter but it needs to be there. No matter also the enumeration and order for that column.

If the images are 3D, three columns need to be present and their names must be

[axis-0, axis-1, axis-2], which represent(z,y,x)axes. If the images are 2D, only two columns are required[axis-0, axis-1], which represent(y,x)axes.For multi-class detection problem, i.e.

MODEL.N_CLASSES > 1, add an additionalclasscolumn to the file. The classes need to start from1and consecutive, i.e.1,2,3,4...and not like1,4,8,6....Coordinates can be float or int but they will be converted into ints so they can be translated to pixels.

Metrics

During the inference phase, the performance of the test data is measured using different metrics if test annotations were provided (i.e. ground truth) and, consequently, DATA.TEST.LOAD_GT is True. In the case of detection, the Intersection over Union (IoU) is measured after network prediction:

IoU metric, also referred as the Jaccard index, is essentially a method to quantify the percent of overlap between the target masks (small point masks in the detection workflows) and the prediction output. Depending on the configuration, different values are calculated (as explained in Weighting options and Metric measurement). This values can vary a lot as stated in [4].

Per patch: IoU is calculated for each patch separately and then averaged.

Reconstructed image: IoU is calculated for each reconstructed image separately and then averaged. Notice that depending on the amount of overlap/padding selected the merged image can be different than just concatenating each patch.

Full image: IoU is calculated for each image separately and then averaged. The results may be slightly different from the reconstructed image.

Then, after extracting the final points from the predictions, precision, recall and F1 are defined as follows:

Precision, is the fraction of relevant points among the retrieved points. More info here.

Recall, is the fraction of relevant points that were retrieved. More info here.

F1, is the harmonic mean of the precision and recall. More info here.

The last three metrics, i.e. precision, recall and F1, use TEST.DET_TOLERANCE to determine when a point is considered as a true positive. In this process the test resolution is also taken into account.

Post-processing

After network prediction, if your data is 3D (e.g. PROBLEM.NDIM is 2D or TEST.ANALIZE_2D_IMGS_AS_3D_STACK is True), there are the following options to improve your object probabilities:

Z-filtering: to apply a median filtering in

zaxis. Useful to maintain class coherence across 3D volumes. Enable it withTEST.POST_PROCESSING.Z_FILTERINGand useTEST.POST_PROCESSING.Z_FILTERING_SIZEfor the size of the median filter.YZ-filtering: to apply a median filtering in

yandzaxes. Useful to maintain class coherence across 3D volumes that can work slightly better thanZ-filtering. Enable it withTEST.POST_PROCESSING.YZ_FILTERINGand useTEST.POST_PROCESSING.YZ_FILTERING_SIZEfor the size of the median filter.

Finally, discrete points are calculated from the predicted probabilities. Some post-processing methods can then be applied as well:

Remove close points: to remove redundant close points to each other within a certain radius (controlled by

TEST.POST_PROCESSING.REMOVE_CLOSE_POINTS). The radius value can be specified using the variableTEST.POST_PROCESSING.REMOVE_CLOSE_POINTS_RADIUS. In this post-processing is important to setDATA.TEST.RESOLUTION, specially for 3D data where the resolution inzdimension is usually less than in other axes. That resolution will be taken into account when removing points.Create instances from points: Once the points have been detected and any close points have been removed, it is possible to create instances from the remaining points. The variable

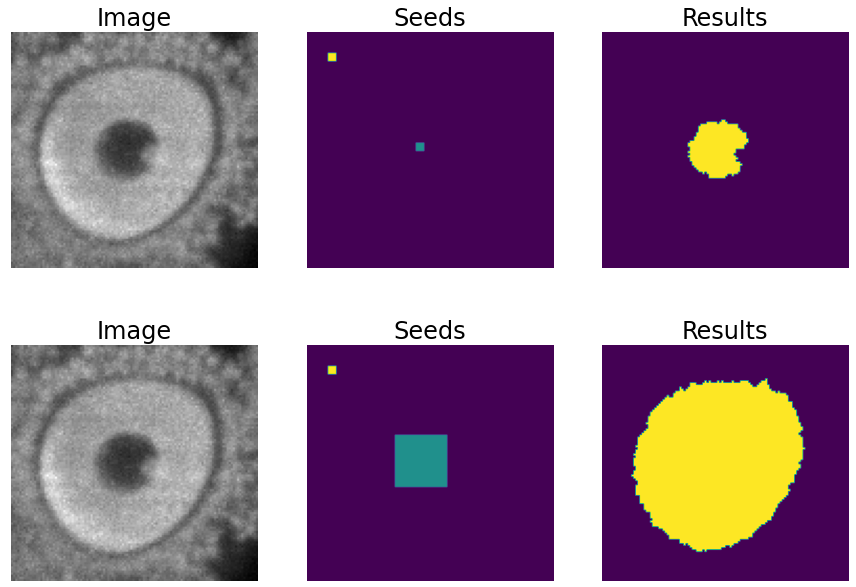

TEST.POST_PROCESSING.DET_WATERSHEDcan be set to perform this step. However, sometimes cells have low contrast in their centers, for example due to the presence of a nucleus. This can result in the seed growing to fill only the nucleus while the cell is much larger. In order to address the issue of limited growth of certain types of seeds, a process has been implemented to expand the seeds beyond the borders of their nuclei. This process allows for improved growth of these seeds. To ensure that this process is applied only to the appropriate cells, variables such asTEST.POST_PROCESSING.DET_WATERSHED_DONUTS_CLASSES,TEST.POST_PROCESSING.DET_WATERSHED_DONUTS_PATCH, andTEST.POST_PROCESSING.DET_WATERSHED_DONUTS_NUCLEUS_DIAMETERhave been created. It is important to note that these variables are necessary to prevent the expansion of the seed beyond the boundaries of the cell, which could lead to expansion into the background.

Post-processing option in the detection workflows: create instances from point detections. From left to right: raw image, initial seeds for the watershed and the resulting instances after growing the seeds. In the first row, the problem with nucleus visible type cells is depicted, where the central seed can not be grown more than the nucleus border. In the second row, the solution of dilating the central point is depicted.

Results

The results are placed in results folder under --result_dir directory with the --name given. Following the example, you should see that the directory /home/user/exp_results/my_2d_detection has been created. If the same experiment is run 5 times, varying --run_id argument only, you should find the following directory tree:

config_files: directory where the .yaml filed used in the experiment is stored.my_2d_detection.yaml: YAML configuration file used (it will be overwrited every time the code is run).

checkpoints, optional: directory where model’s weights are stored. Only created whenTRAIN.ENABLEisTrueand the model is trained for at least one epoch. Can contain:my_2d_detection_1-checkpoint-best.pth, optional: checkpoint file (best in validation) where the model’s weights are stored among other information. Only created when the model is trained for at least one epoch.normalization_mean_value.npy, optional: normalization mean value. Is saved to not calculate it everytime and to use it in inference. Only created ifDATA.NORMALIZATION.TYPEiscustom.normalization_std_value.npy, optional: normalization std value. Is saved to not calculate it everytime and to use it in inference. Only created ifDATA.NORMALIZATION.TYPEiscustom.

results: directory where all the generated checks and results will be stored. There, one folder per each run are going to be placed. Can contain:my_2d_detection_1: run 1 experiment folder. Can contain:aug, optional: image augmentation samples. Only created ifAUGMENTOR.AUG_SAMPLESisTrue.charts, optional: only created whenTRAIN.ENABLEisTrueand epochs trained are more or equalLOG.CHART_CREATION_FREQ. Can contain:my_2d_detection_1_*.png: plot of each metric used during training.my_2d_detection_1_loss.png: loss over epochs plot.

per_image, optional: only created ifTEST.FULL_IMGisFalse. Can contain:.tif files, optional: reconstructed images from patches. Created whenTEST.BY_CHUNKS.ENABLEisFalseor whenTEST.BY_CHUNKS.ENABLEisTruebutTEST.BY_CHUNKS.SAVE_OUT_TIFisTrue..zarr files (or.h5), optional: reconstructed images from patches. Created whenTEST.BY_CHUNKS.ENABLEisTrue.

full_image, optional: only created ifTEST.FULL_IMGisTrue. Can contain:.tif files: full image predictions.

per_image_local_max_check, can contain:.tif files, optional: same asper_imagebut with the final detected points in tif format. Created when no post-processing is applied.*_points.csv files, optional: Contains point locations for each test chunk.*_all_points.csv files, optional: all points of all chunks together for each test Zarr/H5 sample. Created ifTEST.BY_CHUNKS.ENABLEisTrueand no post-processing is applied.

per_image_local_max_check_post_processing, optional: only created when any detection post-processing step is enabled (i.e.TEST.POST_PROCESSING.REMOVE_CLOSE_POINTSorTEST.POST_PROCESSING.DET_WATERSHEDisTrue). Contains the same outputs asper_image_local_max_checkbut reflecting the detected points after post-processing has been applied:.tif files, optional: same asper_imagebut with the post-processed detected points overlaid in tif format.*_points.csv files, optional: point locations for each test sample or test chunk after post-processing.*_all_points.csv files, optional: all post-processed points of all chunks together for each test Zarr/H5 sample. Created ifTEST.BY_CHUNKS.ENABLEisTrue.

point_associations, optional: only if ground truth was provided by settingDATA.TEST.LOAD_GT. Can contain:.tif files, coloured associations per each matching threshold selected to be analised (controlled byTEST.MATCHING_STATS_THS_COLORED_IMG) for each test sample or test chunk. Green is a true positive, red is a false negative and blue is a false positive..csv files: false positives (_fp) and ground truth associations (_gt_assoc) for each test sample or test chunk. There is a file per each matching threshold selected (controlled byTEST.MATCHING_STATS_THS).

watershed, optional: only ifTEST.POST_PROCESSING.DET_WATERSHEDandPROBLEM.DETECTION.DATA_CHECK_MWareTrue. Can contain:seed_map.tif: initial seeds created before growing.semantic.tif: region where the watershed will run.foreground.tif: foreground mask area that delimits the grown of the seeds.

train_logs: each row represents a summary of each epoch stats. Only avaialable if training was done.tensorboard: tensorboard logs.test_results_metrics.csv: a CSV file containing all the evaluation metrics obtained on each file of the test set if ground truth was provided.

Note

Here, for visualization purposes, only my_2d_detection_1 has been described but my_2d_detection_2, my_2d_detection_3, my_2d_detection_4 and my_2d_detection_5 will follow the same structure.